1. The Algorithmic Dawn (Pre-2015)

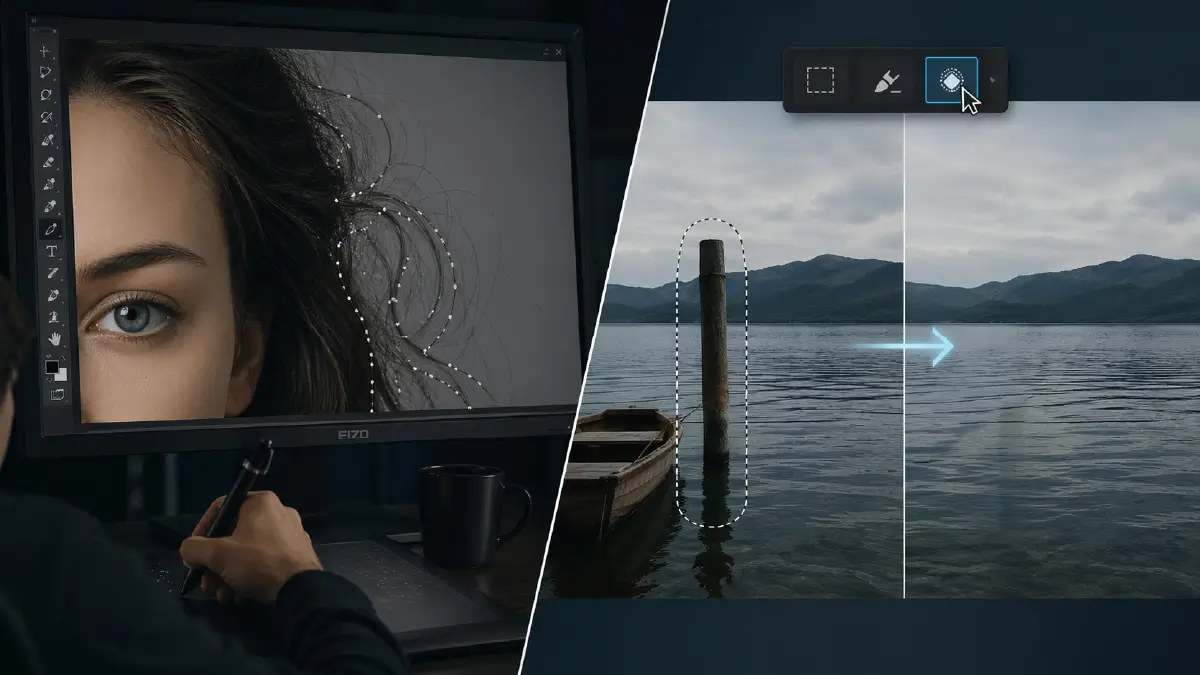

I still remember the night I lost three hours to a single strand of hair.

Beauty campaign, global rollout, twenty-something me hunched over a 24-inch Eizo, painstakingly tracing flyaway hairs with the Pen tool at 300% zoom. Every anchor point felt like a tiny insult. Client wanted it “perfect, but natural,” which is code for: fix everything and make sure no one can tell you did. When I finally finished the mask, my hand buzzed like I’d been playing guitar for a stadium instead of wrestling with a fringe.

A few years later, I watched Photoshop CS5’s Content-Aware Fill demo and felt something between admiration and mild existential dread. Draw a loose selection, hit a button, and the software just…guessed the background. No careful cloning, no elaborate layer stacks, no rebuilding texture by hand. That was the first time I thought, “Okay, the machine isn’t just helping anymore. It’s starting to think.”

Back then, no one in the studio called it AI. It was just “the new tool in CS5.” But that’s where this whole story really starts.

1.1 Early computational “helpers”

Before 2010, our so‑called “smart” tools were mostly glorified math tricks: Auto Levels, Auto Contrast, Auto Color, basic algorithmic masking, and edge detection. They ran fixed formulas over the pixels—histograms, edge maps, thresholding—and spat out a result. Useful sometimes, but dumb as bricks. You learned quickly: never trust Auto anything on a paying job.
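To make "fixed formulas over the pixels" concrete, here is a minimal sketch of what an Auto Levels style stretch boils down to. It is not Adobe's actual implementation; the function name `auto_levels` and the `clip_percent` parameter are my own illustrative choices, and it works on a flat list of 8-bit grayscale values rather than a real image.

```python
def auto_levels(pixels, clip_percent=0.5):
    """Stretch 8-bit grayscale values so the clipped histogram spans 0..255.

    Illustrative only: a fixed formula with no learning involved.
    """
    if not pixels:
        return []

    # Build a 256-bin histogram of the input values.
    histogram = [0] * 256
    for value in pixels:
        histogram[value] += 1

    # Number of pixels to ignore at each end of the histogram.
    clip_count = len(pixels) * clip_percent / 100.0

    # Walk up from black until the clipped share is exceeded.
    running, low = 0, 0
    for level in range(256):
        running += histogram[level]
        if running > clip_count:
            low = level
            break

    # Walk down from white the same way.
    running, high = 0, 255
    for level in range(255, -1, -1):
        running += histogram[level]
        if running > clip_count:
            high = level
            break

    if high <= low:
        return list(pixels)  # flat image, nothing to stretch

    # Linear remap: low -> 0, high -> 255, clamped to the 8-bit range.
    return [
        min(255, max(0, round((v - low) * 255.0 / (high - low))))
        for v in pixels
    ]


flat = [100, 110, 120, 130, 140, 150]
print(auto_levels(flat, clip_percent=0.0))  # [0, 51, 102, 153, 204, 255]
```

The formula has no idea what the picture is. A moody low-key portrait and an underexposed mistake get exactly the same stretch, which is why nobody trusted it on a paying job.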

Even the early Content-Aware tech was essentially pattern analysis and patch synthesis, not machine learning in the modern sense. It examined surrounding pixels and tried to infer what should fill the gap when you removed an object. It felt magical on a demo stage and “pretty good, needs cleanup” in production, but for a working retoucher, it wasn’t replacing the craft. It was accelerating the boring bits.

We still built dodge and burn by hand, painted masks on a Wacom, and ran frequency separation with custom actions. The goal was clear: speed up manual labor, not bypass it. Nobody worried about the job disappearing. We were just grateful that cloning out telephone wires no longer took an entire afternoon.

2. The Invisible Assistants (2015–2020)

Around the mid‑2010s, the language changed. Suddenly, every plugin promised “AI-powered” something. This wasn’t just smarter heuristics; neural networks started creeping into everyday tools.

2.1 From presets to real machine learning

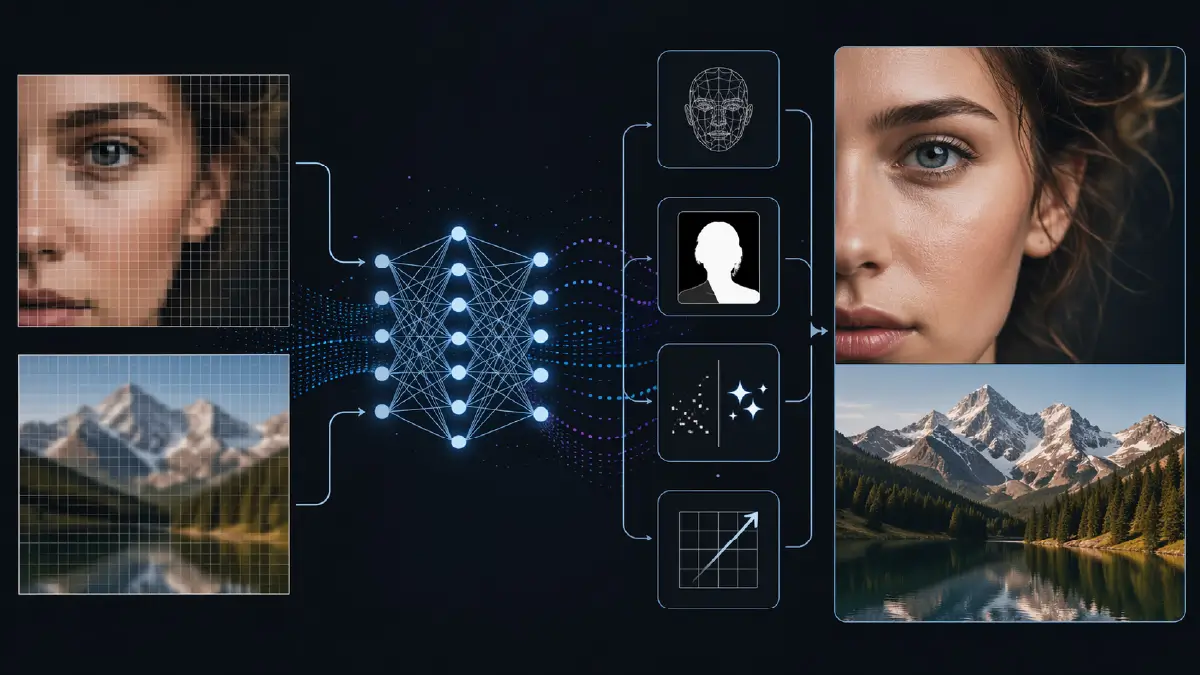

Companies like Topaz Labs leaned hard into AI denoising and machine learning upscaling. Topaz Gigapixel AI sold itself as an image upscaler that didn’t just add pixels; it added the right pixels, reconstructing plausible detail from low‑resolution sources. For once, that marketing line wasn’t completely delusional. When you compared its output to traditional bicubic upscaling, the difference was brutal: eyelashes, pores, fabric weave—suddenly they were there again, or at least convincing hallucinations.

Under the hood, these tools used trained models—early cousins of today’s GANs (Generative Adversarial Networks) and other deep learning architectures—to map low‑res inputs to high‑res outputs. The system lived in latent space, a compressed representation where “sharpness,” “noise,” and “texture” became directions you could move along, even if the user never saw the math.

We also got AI noise reduction that could distinguish between sensor noise and actual detail far better than the old sliders. You could rescue underexposed event work or high‑ISO lifestyle shoots that would previously have been write‑offs.

2.2 Algorithmic masking grows teeth

In parallel, selection tools evolved. Photoshop’s Select Subject and related features leaned on machine learning‑based segmentation to identify people, skies, hair, and objects in a single click. These models were trained on huge datasets of labeled images, learning what a “person” or “sky” looked like statistically rather than from a hand‑coded rule set.

For a working retoucher, this was a quiet revolution. Those tedious masks around curly hair, complex product cutouts, or fabric edges suddenly started from a 70–80% solution instead of zero. You’d still refine with channel-based masks, manual brushing, or more frequency separation work on edges—but the time savings added up to a different kind of day.

2.3 Specialized AI plug‑ins: the ghost workforce

By the late 2010s, a whole ecosystem of specialized tools had emerged:

- AI skin smoothing and portrait plugins (including early versions of tools like Retouch4Me) learned the difference between blemishes, pores, and makeup, making “plastic skin” much easier to avoid.

- ML‑driven upscalers and denoisers became standard for wedding, event, and e‑commerce workflows.

- Background removal services used deep learning to auto‑cut subjects for catalog work, social media, and marketplaces.

These were the invisible assistants. They didn’t scream “look at this new medium,” they just quietly saved retouchers thousands of hours of drudge work. The craft was still there—taste, restraint, understanding of light—but the machine handled more of the grunt work in the background.

3. The Consumer Filter Boom

Then the dam broke.

While professionals were arguing about whether AI denoising was “cheating,” the rest of the world discovered beauty filters. Suddenly, what required a skilled retoucher and a calibrated monitor in 2008 became a single tap on a smartphone: slimmer jawline, bigger eyes, smoother skin, new hair color.

3.1 Snapchat, FaceApp, TikTok and the new face

Apps like Snapchat normalized face filters as a form of casual play—dog ears, rainbow vomit, soft beauty looks. Then FaceApp arrived and upped the stakes with hyper‑realistic age progression, gender swaps, and “perfected” faces using advanced deep learning models. These systems used network architectures similar to GANs to convincingly map one face state into another: young to old, male to female, plain to glamorous.

At the same time, TikTok and Instagram embraced AR and AI filters that reshaped faces in real time: nose size, lip volume, skin texture, even bone structure. This wasn’t subtle retouching; it was live‑action identity editing.

What once belonged to high‑end editorial and advertising retouching—sculpting cheekbones, cleaning skin, slightly widening eyes—became default camera behavior for millions of people.

3.2 Reality distortion as a consumer feature

This consumer boom changed the cultural relationship with retouching. For the general public, AI editing was no longer a hidden post-production step; it was a default lens on reality. You didn’t think, “I’ll retouch that later.” You thought, “Which filter makes me the version of myself I can tolerate?”

At the same time, serious concerns emerged about body image, bias, and beauty standards. When the average teenager sees their real face less often than their AI‑filtered one, the line between photograph and wishful self‑portrait starts to dissolve. Researchers and critics pointed out how apps like FaceApp encode and reinforce certain aesthetic norms in their “improvements.”

Professionally, clients started asking for the “FaceApp vibe,” expecting skin and facial features that existed more in latent space than in real life. The bar for “normal” beauty shifted toward whatever the algorithm made ubiquitous.

4. The Generative Explosion (2022–Present)

If the filter boom bent reality, generative AI snapped it outright.

4.1 Stable Diffusion, Midjourney, and the prompt era

Around 2022, models like Stable Diffusion and Midjourney turned “AI art” from a research curiosity into an everyday tool. Stable Diffusion v1 and later v2 were released as text-to-image models with openly downloadable weights, allowing anyone with a decent GPU to generate high‑quality imagery from a prompt. The barrier between idea and image collapsed to a sentence and a progress bar.

These models work in a high‑dimensional latent space, where images are compressed into numerical representations. Starting from noise, they iteratively denoise toward an image that matches the text prompt, guided by learned associations between words and visual patterns. No pixels are “edited” in the traditional sense; they’re synthesized from statistical memory.
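For the curious, the "start from noise, denoise step by step" loop can be sketched in a few lines. This is a toy, not how Stable Diffusion is actually built: the four-number "latents" in `TOY_TARGETS`, the linear `alpha_bar` schedule, and the `predict_noise` function are all assumptions for illustration. In particular, `predict_noise` is a hand-written oracle standing in for the trained neural network that a real model would use. The update itself is a deterministic DDIM-style step.

```python
import math
import random

# Hypothetical "learned associations": each prompt maps to a tiny 4-value latent.
TOY_TARGETS = {
    "sunset": [0.9, 0.5, 0.1, -0.3],
    "forest": [-0.2, 0.8, -0.1, 0.4],
}


def alpha_bar(t, steps):
    """Share of clean signal at step t: 1.0 at t=0, about 0.01 at t=steps."""
    return 1.0 - 0.99 * (t / steps)


def predict_noise(x_t, prompt, ab_t):
    """Stand-in for a trained network: estimates the noise present in x_t."""
    target = TOY_TARGETS[prompt]
    return [
        (x - math.sqrt(ab_t) * c) / math.sqrt(1.0 - ab_t)
        for x, c in zip(x_t, target)
    ]


def generate(prompt, steps=50, seed=0):
    rng = random.Random(seed)
    # Start from Gaussian noise with the same shape as the latent.
    x = [rng.gauss(0.0, 1.0) for _ in TOY_TARGETS[prompt]]
    for t in range(steps, 0, -1):
        ab_t = alpha_bar(t, steps)
        ab_prev = alpha_bar(t - 1, steps)
        eps = predict_noise(x, prompt, ab_t)
        # Clean latent implied by the predicted noise.
        x0_hat = [
            (xi - math.sqrt(1.0 - ab_t) * e) / math.sqrt(ab_t)
            for xi, e in zip(x, eps)
        ]
        # Step to the slightly cleaner noise level t-1.
        x = [
            math.sqrt(ab_prev) * x0 + math.sqrt(1.0 - ab_prev) * e
            for x0, e in zip(x0_hat, eps)
        ]
    return x


latent = generate("sunset")
print([round(v, 3) for v in latent])
```

Because the oracle already knows the answer, every seed lands on the same latent. A real network only approximates the noise, which is where the variation between seeds (and the cursed hands) comes from.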

Meanwhile, Midjourney built a cult around its painterly style and Discord‑driven workflow. Art directors who once scribbled thumbnails in notebooks started iterating campaign looks with prompts instead.

4.2 Adobe Firefly and Generative Fill: the moment the walls moved

The real existential gut punch for retouchers landed when Adobe wired generative models straight into Photoshop. Adobe Firefly, announced as a public beta in March 2023 and later rolled out to Creative Cloud users, became the engine behind many of Adobe’s generative features.

Generative Fill took the old Content-Aware Fill idea and injected a text‑guided brain into it. Instead of just inferring background from surrounding pixels, you could type instructions:

- “Remove person on left, extend background.”

- “Add soft window light from the right.”

- “Replace sky with dramatic sunset.”

The machine didn’t just patch; it invented—objects, lighting, architecture, entire scenes—based on its training data and the prompt.

For the first time, a creative director could sit at the keyboard and type, “remove background and add a Parisian cafe,” and get a convincing composite without ever calling their usual retoucher. That’s not a workflow tweak; that’s a paradigm shift.

4.3 The existential crisis of the retoucher

If your identity for twenty years has been “the person who can realistically remove, add, and reshape anything in an image,” watching a prompt bar do a passable version in ten seconds is…educational.

You start asking unpleasant questions:

- If the machine can generate perfect product reflections, who needs my painstaking pathwork and gradient builds?

- When a client wants a background change, do they still value my nuanced color matching and the way I respect the original lighting?

- If stock agencies accept AI-generated content alongside photography, what exactly is my raw material now?

But here’s the thing most of the hype misses: generative tools are reckless interns, not seasoned supervisors. They hallucinate logos, break perspective, invent anatomically cursed hands, and ignore brand guidelines. They don’t understand usage rights, client politics, legal restrictions, or taste. That gap between “good enough for Instagram” and “safe for a global campaign” is still where professionals live.

5. Trust, Truth, and the Human Element

As the tools got smarter, the images got less trustworthy.

5.1 Kategate and the collapse of casual trust

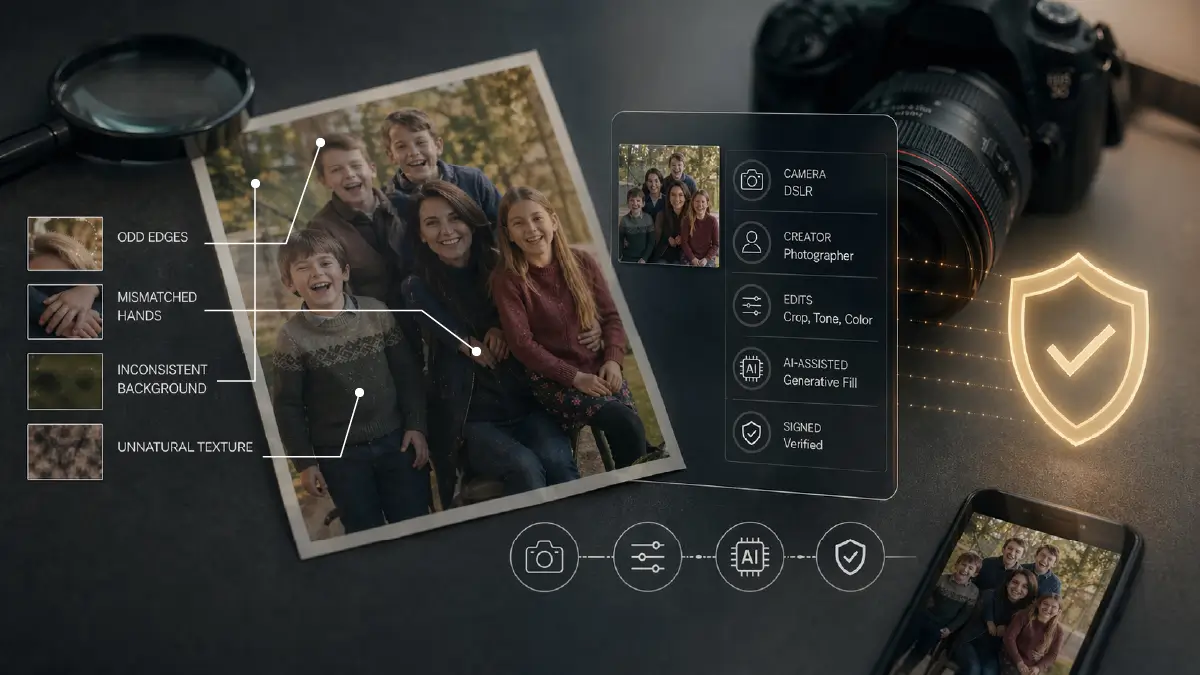

In March 2024, a seemingly wholesome Mother’s Day photo of Catherine, Princess of Wales, and her children blew up into “Kategate” when major news agencies pulled the image, citing signs of manipulation. The palace later admitted that Kate had edited the photo herself.

On a technical level, the edits weren’t extraordinary—odd edges, mismatched hands, small inconsistencies. But culturally, the reaction was revealing. People didn’t just say, “Oh, bad retouch.” They said, “What else is fake? What are we being shown? What’s being hidden?” A single clumsy edit cracked the thin shell of trust around official imagery.

And that’s the environment we’re working in now: a world where audiences assume everything—from candid celebrity photos to war footage—might be AI‑altered or fully generated.

5.2 Photograph vs. image

We’ve hit a philosophical choke point: what counts as a photograph anymore?

- If you clean skin with frequency separation and a bit of dodge and burn, most people still consider it a photo.

- If you remove an exit sign, extend the background, and swap the sky, some will grumble but accept it as industry standard.

- If you replace half the scene using Generative Fill or synthesize a subject with Stable Diffusion, are you still dealing with a photograph, or did you cross into pure image-making?

The pixels don’t care. Only the intent and the disclosure do.

Clients are beginning to ask for clarity: Was this shot in camera? Was the product rendering based on real geometry or hallucinated? Are the models real people or generated composites? Legal teams worry about defamation, false advertising, and IP infringement. Viewers—after scandals like Kategate—are more suspicious than ever.

5.3 C2PA and Content Credentials: trust as metadata

That’s where initiatives like C2PA (Coalition for Content Provenance and Authenticity) come in. Founded by organizations including Adobe, Microsoft, and others, C2PA defines a standard for cryptographically bound provenance data attached directly to media.

Adobe’s implementation, Content Credentials, lets cameras and editing tools embed a tamper‑evident record of what happened to an image—who created it, what edits were made, whether AI was involved. The idea is simple: if we can’t stop people from altering images, at least we can mark which ones are telling the truth about their own manipulation.

This doesn’t magically fix anything. Most users will ignore it; bad actors will strip metadata. But for professional work—editorial, news, commercial—it’s a way to say, “We’re not asking you to trust the pixels. We’re asking you to trust the process behind them.”
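If "tamper-evident record" sounds abstract, the core idea fits in a short sketch. This is not the C2PA manifest format or Adobe's API: real Content Credentials rely on certificate-based signatures, while this toy uses an HMAC with a shared demo key as a stand-in. The names `make_credential`, `verify_credential`, and `SIGNING_KEY` are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical demo key; real systems sign with certificates, not a shared secret.
SIGNING_KEY = b"demo-key-not-for-production"


def make_credential(image_bytes, creator, edits, used_ai):
    """Bind an edit history to the exact bytes of an image."""
    manifest = {
        "creator": creator,
        "edits": edits,
        "used_generative_ai": used_ai,
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
    }
    payload = json.dumps(manifest, sort_keys=True).encode("utf-8")
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"manifest": manifest, "signature": signature}


def verify_credential(image_bytes, credential):
    """Return True only if neither the manifest nor the pixels were altered."""
    payload = json.dumps(credential["manifest"], sort_keys=True).encode("utf-8")
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    manifest_intact = hmac.compare_digest(expected, credential["signature"])
    current_hash = hashlib.sha256(image_bytes).hexdigest()
    pixels_match = credential["manifest"]["image_sha256"] == current_hash
    return manifest_intact and pixels_match


original = b"pretend these are image bytes"
cred = make_credential(original, "Studio A", ["crop", "generative fill"], True)
print(verify_credential(original, cred))               # True
print(verify_credential(original + b"edited", cred))   # False
```

Note what the check does and does not prove. It tells you the record and the file still belong together. It says nothing about an image whose record was stripped, which is exactly the bad-actor case.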

5.4 Taste, restraint, and responsibility

Ironically, as the technical barrier drops, taste becomes more critical. When anyone can type “perfect skin, cinematic lighting, blue hour, 85mm depth of field” and get something convincing, the question shifts from “Can we?” to “Should we?”

What separates a pro now:

- Knowing when to leave imperfection—a wrinkle, a scar, a stray hair—because it tells the right story.

- Understanding that pushing a model’s features toward some AI‑averaged standard of beauty can cross ethical lines.

- Recognizing when generative replacement crosses into misrepresentation or cultural insensitivity.

- Being willing to disclose: “Yes, this background is generated.” “Yes, we composited three frames.” “Yes, that’s a CG product render.”

The most valuable skills in the room are suddenly art direction, ethical judgment, and creative restraint, not how precisely you can paint on a layer mask.

6. Final Thought

When I started, perfection was a slog. You earned every pore and every highlight with muscle memory and late nights. The craft was in the grind.

Now, I can type a prompt and watch the machine conjure in fifteen seconds what used to take me fifteen hours. It’s outrageous. It’s horrifying. It’s intoxicating.

But here’s the uncomfortable truth: the desire at the core of all this hasn’t changed at all. Clients still want the product to look better than it does in real life. People still want to see a version of themselves that’s just a little kinder than the mirror. Brands still chase an idealized world where everything is on-message and on-model.

We went from pixels to prompts, from brushes to latent space, but the hunger behind the retouch is the same: polish the real into something just beyond reach. The tools have finally caught up with the fantasy. The real question is whether we’ve grown enough—as artists, as clients, as viewers—to decide when to stop asking them to.

Key Takeaways

- Early “AI” tools like Content-Aware Fill were algorithmic accelerators, not true machine learning, designed to reduce manual grunt work, not replace the retoucher.

- From 2015 to 2020, neural networks quietly embedded themselves into workflows via AI denoising, upscaling, and automated masking, acting as invisible assistants that saved thousands of hours.

- The consumer filter boom—Snapchat, FaceApp, TikTok—turned extreme retouching into a casual habit and reshaped public expectations of how faces “should” look.

- The generative explosion with Stable Diffusion, Midjourney, Adobe Firefly, and Generative Fill shifted the game from editing pixels to generating them, triggering a real identity crisis for retouchers.

- Scandals like Kategate and standards like C2PA/Content Credentials show that the industry is now wrestling with authenticity, provenance, and disclosure as core responsibilities, not side issues.